Preface

To build an early foundation, it helps to understand the 5 algorithms most commonly used in rate limiting.

If you are not familiar with what a rate limiter is, check out the "Easy" article I previously wrote: Rate Limiters Made Easy. For a quick review, I discussed the Token Bucket rate limiting algorithm here.

This is part of my series on learning how to pass system design interviews.

Here are the 5 Most Commonly Seen Rate Limiting Algorithms in Real Production Environments

- Token Bucket - Learn

- Leaking Bucket - Learn

- Fixed Window Counter - Learn

- Sliding Window Log - Learn

- Sliding Window Counter - Learn

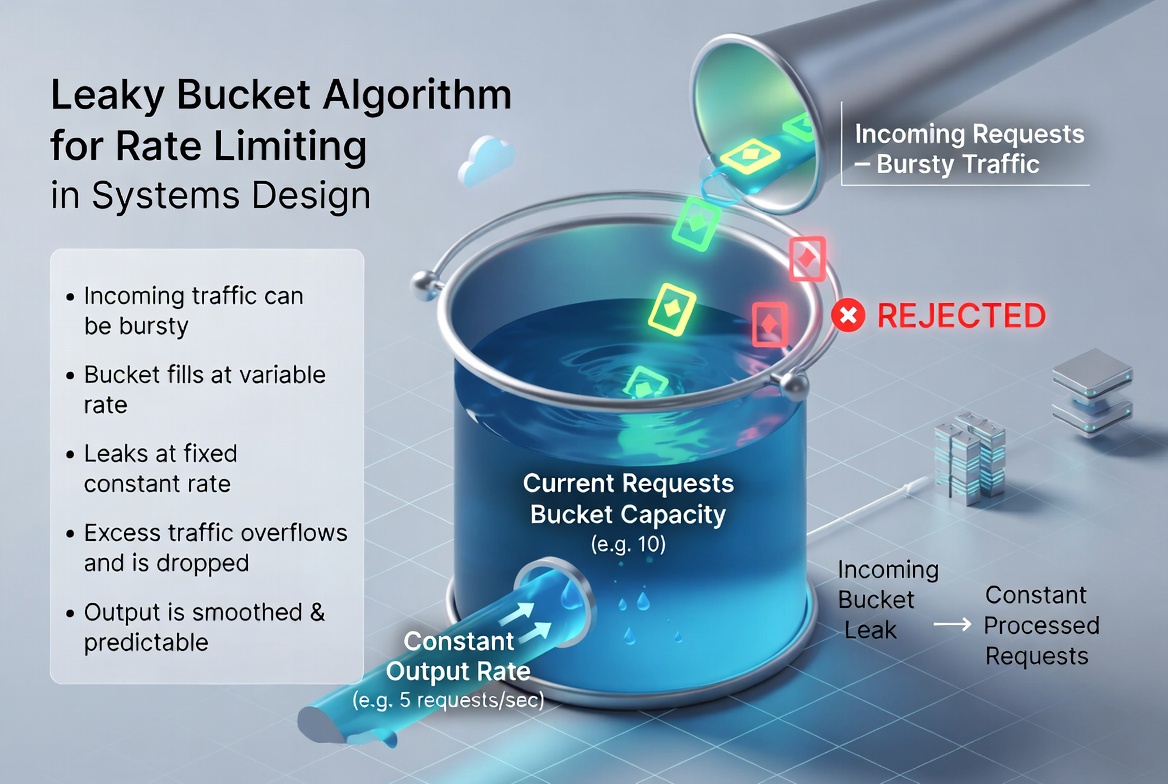

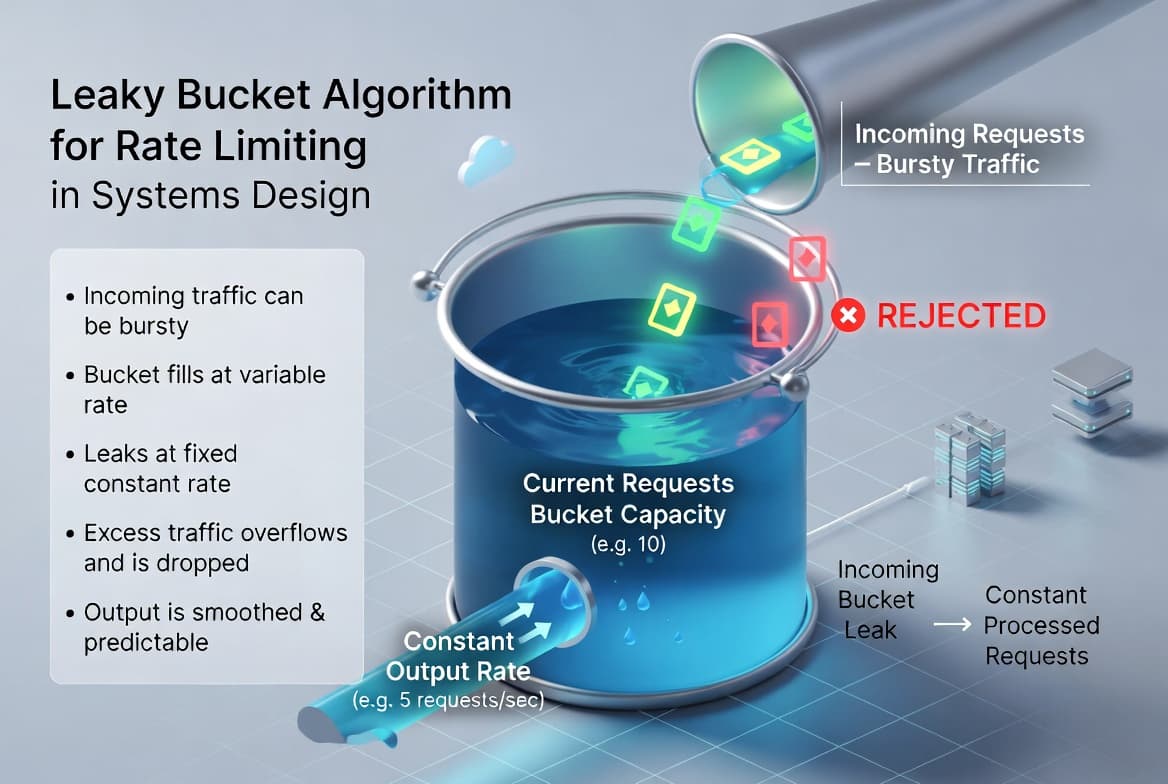

Leaking Bucket

By now you should be familiar with the token bucket algorithm. The leaking bucket behaves similarly.

If you don't remember, the token bucket holds a fixed number of coins, let's say 4, and is refilled with 2 coins every second. If the token bucket is already full, the replacement coins overflow and are discarded. If a rapid series of spam messages exhausts the bucket before it has time to refill, you hit your rate limit, and any requests made while the bucket has no coins are dropped.
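As a quick refresher, here is a minimal sketch of that token bucket, assuming the 4-coin capacity and 2-coins-per-second refill from the example above. The class name `TokenBucket`, the `allow` method, and the explicit `now` timestamp (passed in so the example does not depend on a real clock) are my own choices for illustration, not a standard API.

```python
class TokenBucket:
    """Token bucket with a fixed capacity and a steady refill rate."""

    def __init__(self, capacity=4, refill_per_second=2.0, now=0.0):
        self.capacity = capacity
        self.refill_per_second = refill_per_second
        self.tokens = float(capacity)  # the bucket starts full
        self.last_refill = now

    def allow(self, now):
        """Return True if a request arriving at time `now` (in seconds) may pass."""
        elapsed = now - self.last_refill
        # Refill for the elapsed time, but never beyond capacity:
        # coins that would not fit simply overflow and are lost.
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_per_second)
        self.last_refill = now
        if self.tokens >= 1:
            self.tokens -= 1  # spend one coin on this request
            return True
        return False  # no coins left, so the request is dropped


bucket = TokenBucket(capacity=4, refill_per_second=2.0, now=0.0)

# Six requests arrive in the same instant: the first four spend the coins,
# the last two find the bucket empty.
burst = [bucket.allow(now=0.0) for _ in range(6)]

# One second later, 2 coins have been refilled, so a request passes again.
after_one_second = bucket.allow(now=1.0)
```

Notice that the whole burst of 4 is let through immediately. That is the key behavior the leaking bucket changes.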

The leaking bucket works almost the exact same way.

However, with the leaking bucket, a rapid burst of spam messages is never processed all at once. Requests are processed at a fixed rate, no matter how quickly they arrive. It's easy to remember if you picture requests leaking out at a fixed pace, like water dripping from a bucket.

How it's implemented

The most common way is to use a queue, with its FIFO (First-In-First-Out) behavior. Here's the chain of events, from sent to processed.

- You send a request, and the system checks if the queue is full. If it is not full, the request is added to the queue.

- If the queue is full, the request is dropped.

- Requests are pulled at a fixed rate from the queue and processed. Think 1 request per second.
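The steps above can be sketched in a few lines of Python. This is only an illustration under my own assumptions: the names `LeakyBucket`, `submit`, and `leak` are hypothetical, and I assume some timer or worker calls `leak()` once per second, which is left out to keep the example short.

```python
from collections import deque


class LeakyBucket:
    """Leaking bucket backed by a bounded FIFO queue."""

    def __init__(self, capacity=4):
        self.capacity = capacity
        self.queue = deque()

    def submit(self, request):
        """Add the request to the queue, or drop it if the queue is full."""
        if len(self.queue) >= self.capacity:
            return False  # queue is full, the request is dropped
        self.queue.append(request)
        return True

    def leak(self):
        """Pull the oldest request for processing.

        Assumed to be called at a fixed rate (e.g. once per second)
        by a timer or worker that is not shown here.
        """
        if not self.queue:
            return None
        return self.queue.popleft()  # FIFO: first in, first out


bucket = LeakyBucket(capacity=4)

# A burst of six requests: four fit in the queue, two are dropped.
accepted = [bucket.submit(f"r{i}") for i in range(1, 7)]

# One "drip": the oldest request leaves the queue and frees a slot.
first_out = bucket.leak()
retry = bucket.submit("r7")
```

Unlike the token bucket, accepting a request here does not mean it is processed right away. It only means it has a place in line.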

There are two parameters for this algorithm, which almost exactly match the token bucket's parameters:

- The bucket size, which is the queue size (4 requests)

- Outflow rate (1 request per second)
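To get a feel for how these two parameters interact, here is a small back-of-the-envelope sketch using the example values above. The helper names are hypothetical, and the formulas are rough upper bounds that assume the bucket starts empty and drains at exactly the configured rate.

```python
def worst_case_wait_seconds(bucket_size, outflow_per_second):
    """Upper bound on how long an accepted request sits in a full queue."""
    return bucket_size / outflow_per_second


def max_accepted(bucket_size, outflow_per_second, window_seconds):
    """Rough upper bound on requests accepted within a window,
    starting from an empty bucket: fill it once, then refill each freed slot."""
    return bucket_size + outflow_per_second * window_seconds


wait = worst_case_wait_seconds(bucket_size=4, outflow_per_second=1)
accepted = max_accepted(bucket_size=4, outflow_per_second=1, window_seconds=10)
```

With a bucket size of 4 and an outflow of 1 per second, an accepted request waits at most about 4 seconds, and at most about 14 requests are accepted in any 10 second stretch. A bigger bucket absorbs larger bursts but makes requests wait longer.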

In real production environments, you should determine how many "buckets" you need for different API endpoints. Here are some examples of when you could integrate leaking bucket.

- Smoothing Traffic Spikes: It is ideal for turning bursty incoming traffic into a steady, predictable flow so that downstream services or databases are not overwhelmed. For example, handling Black Friday checkout surges.

- Managing Third-Party API Limits: You can use it to ensure your system never exceeds the strict fixed-rate quotas (e.g., 5 requests per second) required by external vendors. For example, fetching Google Maps location data.

- Asynchronous Background Tasks: It works well for decoupling request arrival from processing, allowing the system to handle expensive tasks like video encoding or report generation at a consistent pace. For example, resizing uploaded profile photos.
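One way to picture "a bucket per endpoint" is a separate queue for each route, each with its own size. The sketch below is only an assumption of how that could look: the endpoint paths and capacities are made up for illustration, and the fixed-rate worker that would call `leak` is again left out.

```python
from collections import deque

# Hypothetical endpoints and capacities, chosen only for illustration.
BUCKET_CAPACITY = {
    "/checkout": 3,
    "/photos/resize": 1,
}

# One independent FIFO queue (bucket) per endpoint.
queues = {endpoint: deque() for endpoint in BUCKET_CAPACITY}


def submit(endpoint, request):
    """Queue the request in its endpoint's bucket; return False if that bucket is full."""
    queue = queues[endpoint]
    if len(queue) >= BUCKET_CAPACITY[endpoint]:
        return False
    queue.append(request)
    return True


def leak(endpoint):
    """Pull the oldest request of one endpoint, or None if its bucket is empty."""
    queue = queues[endpoint]
    return queue.popleft() if queue else None


results = [
    submit("/photos/resize", "photo-1"),  # accepted
    submit("/photos/resize", "photo-2"),  # dropped: this bucket holds only 1
    submit("/checkout", "order-1"),       # accepted: separate bucket, unaffected
]
```

Because each endpoint has its own queue, a flood of photo uploads cannot push checkout requests out of line.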

Conclusion

In conclusion, a leaking bucket has a fixed queue size and a fixed outflow rate, both determined by your specific implementation of the algorithm. A request is only dropped when the queue is full.

The leaking bucket is memory efficient because the queue has a fixed size, and requests are processed at a fixed rate, which is great for situations where a stable outflow is necessary. The downside is that a burst of traffic fills the queue with old requests, and until those are processed, more recent requests are rate limited. Once again, like the token bucket, the difficult part is fine-tuning the outflow rate and the bucket size.

For the sake of time and proper learning retention, I will discuss the other 3 most common rate limiting algorithms in my next blog.

Summary

Thank you for reading my blog post!

To continue learning the fundamentals of System Design, the next important fundamental to learn is understanding...

Make sure to check out the additional blogs here for materials to help you throughout your learning journeys!

Credit: ByteByteGo - Design a Rate Limiter