Preface

By this point you should have read my previous article, Rate Limiter, and have a good understanding of the 5 most common algorithms.

If you are not familiar with what a rate limiter is, check out this "Easy" article I previously wrote: Rate Limiters Made Easy.

This is part of my series on learning how to pass system design interviews.

Here are the five rate limiting algorithms most commonly seen in real production environments:

- Token Bucket

- Leaking Bucket

- Fixed Window Counter

- Sliding Window Log

- Sliding Window Counter

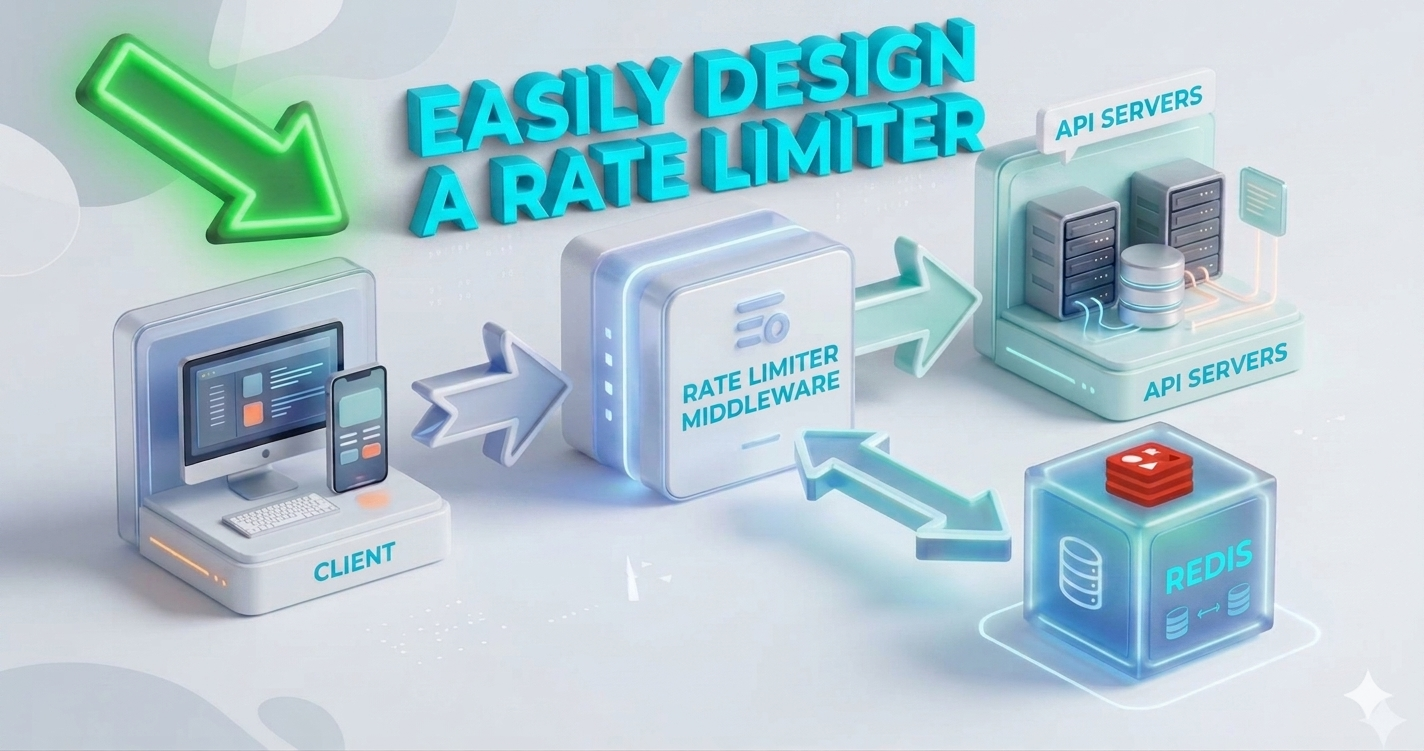

High-Level Architecture

In this deep dive, we’re exploring how to build a production-grade rate limiter, a critical component for protecting your services from abuse and system failure. We will break down the high-level architecture using Redis, tackle the complexities of distributed synchronization, and analyze how to handle race conditions and performance optimization at scale.

As you should now understand, the basic idea of rate limiting is to use a counter to track requests from a specific user, IP address, or device. At its core, if the counter is larger than the allowed limit, the request is disallowed.

To ensure high performance, we store these counters in an in-memory cache like Redis rather than a disk-based database. If you remember the latency chart from the back-of-the-envelope calculations, reading from disk is a slow operation: disk access is measured in milliseconds (0.001 s), while memory access is measured in microseconds (0.000001 s) or less.

Redis is ideal because it is fast and provides two essential commands for this pattern: INCR to increase the counter and EXPIRE to automatically delete it once a time window closes.
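To make the INCR/EXPIRE pattern concrete, here is a minimal fixed window counter sketch in Python. So that it runs without a Redis server, `FakeRedis` is a hypothetical in-memory stand-in that mimics only the two commands above, and the key format `ratelimit:<user>:<window start>` is my own assumption rather than any Redis convention.

```python
import time
from typing import Callable, Dict, Optional, Tuple


class FakeRedis:
    """Hypothetical in-memory stand-in for Redis INCR and EXPIRE (illustration only)."""

    def __init__(self, clock: Callable[[], float] = time.monotonic) -> None:
        self._clock = clock
        # key -> (counter value, absolute expiry time or None)
        self._data: Dict[str, Tuple[int, Optional[float]]] = {}

    def _drop_if_expired(self, key: str) -> None:
        entry = self._data.get(key)
        if entry is not None and entry[1] is not None and self._clock() >= entry[1]:
            del self._data[key]

    def incr(self, key: str) -> int:
        # Like INCR: a missing key starts at 0 and the new value is returned.
        self._drop_if_expired(key)
        value, expires_at = self._data.get(key, (0, None))
        self._data[key] = (value + 1, expires_at)
        return value + 1

    def expire(self, key: str, seconds: float) -> bool:
        # Like EXPIRE: set a timeout after which the key is deleted.
        self._drop_if_expired(key)
        if key not in self._data:
            return False
        self._data[key] = (self._data[key][0], self._clock() + seconds)
        return True


def allow_request(store: FakeRedis, user_id: str, limit: int,
                  window_seconds: int, now: float) -> bool:
    """Fixed window counter built from INCR + EXPIRE."""
    window_start = int(now // window_seconds) * window_seconds
    key = f"ratelimit:{user_id}:{window_start}"  # hypothetical key naming scheme
    count = store.incr(key)
    if count == 1:
        # First request in this window: let the counter vanish when the window closes.
        store.expire(key, window_seconds)
    return count <= limit
```

The counter is incremented before the comparison, so rejected requests still count toward the current window. Against a real Redis, INCR and EXPIRE are two separate commands, so they are often sent together in a MULTI/EXEC transaction or a Lua script so that a crash between them cannot leave a counter without a timeout.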

Here's how the typical data flow works:

- The client sends a request to the rate limiting middleware.

- Redis, an in-memory data store, holds multiple buckets (counters). When the request arrives, the rate limiting middleware looks up the corresponding Redis bucket and checks whether the limit has been reached.

- If the limit is reached, the request is rejected.

- If the limit is not reached, the request is sent to the API servers, and the system increments the counter and saves the updated count in Redis.
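The flow above can be sketched as a small middleware class. This is an illustration under simplifying assumptions: a plain dict stands in for the Redis buckets, `api_server` is a hypothetical callable representing the API servers, and counters never reset because time windows are left out.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict


@dataclass
class Response:
    status: int
    body: str


@dataclass
class RateLimitMiddleware:
    """Sketch of the flow: read the bucket, reject or forward, then increment."""
    limit: int
    api_server: Callable[[str], Response]          # hypothetical downstream API servers
    counters: Dict[str, int] = field(default_factory=dict)  # stand-in for Redis buckets

    def handle(self, client_id: str, request: str) -> Response:
        current = self.counters.get(client_id, 0)   # 1. read the client's bucket
        if current >= self.limit:                   # 2. limit reached: reject
            return Response(429, "Too Many Requests")
        response = self.api_server(request)         # 3. forward to the API servers
        self.counters[client_id] = current + 1      # 4. increment and save the count
        return response
```

The separate read (step 1) and write (step 4) is exactly what turns into a race condition once several servers share one counter, as discussed in the distributed systems section.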

This completes the high-level overview of a rate limiter. Next, I will discuss how to manage the data flow.

Deep Dive: Managing the Data Flow

The high-level architecture in the previous infographic leaves out crucial details that readers should know before proceeding. Questions you should ask are:

- How are rate limiting rules created?

- Where are they stored?

- How do I specifically handle requests that are rate limited?

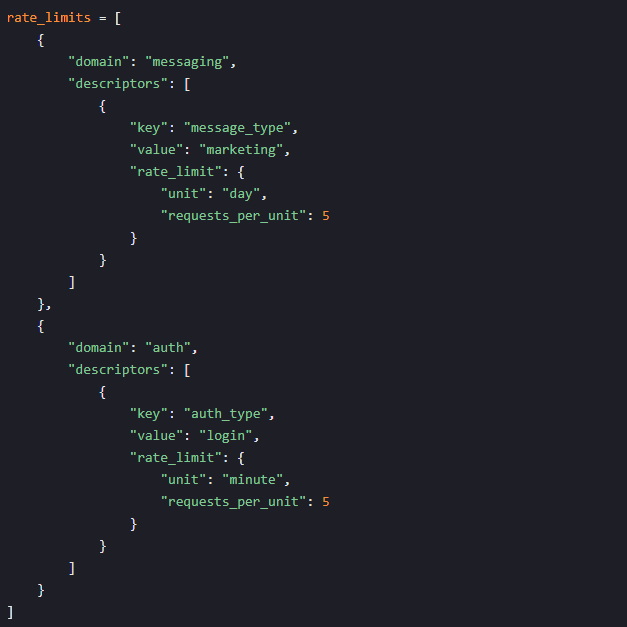

Rate Limiting Rules: Rules define our thresholds (e.g., 5 login attempts per minute).

- In the first example, we are looking at a marketing rate-limiter middleware. The system rules are configured to allow a maximum of 5 marketing messages per day.

- In the second example, we are looking at an authentication rate limiter middleware. The rules are established so that no client can try to log in more than 5 times in one minute.
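The two example rules could be expressed as data like this. I've mirrored the `domain` / `descriptors` / `rate_limit` layout (with `unit` and `requests_per_unit`) used by Lyft's open-source ratelimit service, which normally keeps these rules in YAML files. The domain names and descriptor keys below are illustrative assumptions, and `find_limit` is a hypothetical helper, not part of that project.

```python
from typing import Dict, List, Optional

# Python dicts mirroring the layout of Lyft ratelimit's YAML rule files.
RULES: List[dict] = [
    {   # At most 5 marketing messages per day.
        "domain": "messaging",
        "descriptors": [
            {"key": "message_type", "value": "marketing",
             "rate_limit": {"unit": "day", "requests_per_unit": 5}},
        ],
    },
    {   # At most 5 login attempts per minute.
        "domain": "auth",
        "descriptors": [
            {"key": "auth_type", "value": "login",
             "rate_limit": {"unit": "minute", "requests_per_unit": 5}},
        ],
    },
]

UNIT_SECONDS = {"second": 1, "minute": 60, "hour": 3600, "day": 86400}


def find_limit(domain: str, key: str, value: str) -> Optional[Dict[str, int]]:
    """Return {'limit': N, 'window_seconds': S} for the matching rule, or None."""
    for rule in RULES:
        if rule["domain"] != domain:
            continue
        for descriptor in rule["descriptors"]:
            if descriptor["key"] == key and descriptor["value"] == value:
                rate = descriptor["rate_limit"]
                return {"limit": rate["requests_per_unit"],
                        "window_seconds": UNIT_SECONDS[rate["unit"]]}
    return None  # no rule: the request is not rate limited
```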

Where are they stored: These "rules" are typically written in configuration files and stored on disk, then pulled into a cache by workers for fast access. So we have now answered the first two questions.
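A minimal sketch of the worker side, assuming the rules are stored as a JSON file (Lyft's service uses YAML; JSON here just keeps the example free of third-party dependencies). `RulesCache` and `run_worker` are hypothetical names: the worker reloads the file periodically, while the middleware only ever reads the in-memory copy.

```python
import json
import threading
from typing import Any, Dict


class RulesCache:
    """Hypothetical rules cache: rules live in a JSON file on disk; a worker copies
    them into memory so the middleware never reads disk on the request path."""

    def __init__(self, path: str) -> None:
        self.path = path
        self._rules: Dict[str, Any] = {}

    def refresh(self) -> None:
        # Worker side: read from disk, then replace the whole dict at once so
        # readers never observe a half-loaded rule set.
        with open(self.path, "r", encoding="utf-8") as f:
            rules = json.load(f)
        self._rules = rules

    def get(self, rule_name: str) -> Any:
        # Middleware side: served from memory only.
        return self._rules[rule_name]


def run_worker(cache: RulesCache, interval_seconds: float, stop: threading.Event) -> None:
    """Pull rules from disk every interval_seconds until stop is set."""
    while not stop.is_set():
        cache.refresh()
        stop.wait(interval_seconds)
```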

- How do I specifically handle requests that are rate limited:

Exceeding the Limit: When a user hits their limit, the API returns an HTTP 429 (Too Many Requests). Depending on the design, these requests are either dropped immediately or queued for later processing.

Client Feedback (Headers): We use HTTP response headers to keep the client informed.

- X-Ratelimit-Remaining: The remaining number of allowed requests within the window.

- X-Ratelimit-Limit: How many calls the client can make per time window.

- X-Ratelimit-Retry-After: The number of seconds to wait until you can make a request again without being throttled.
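Here is one way the middleware might compute these headers for a fixed window counter. It's a sketch under assumptions: `count` is the client's counter after the current request was counted, windows are aligned to multiples of `window_seconds`, and the retry value is rounded up to whole seconds.

```python
import math
from typing import Dict, Tuple


def rate_limit_response(count: int, limit: int, window_seconds: int,
                        now: float) -> Tuple[int, Dict[str, str]]:
    """Return (HTTP status, headers) for a fixed window counter."""
    window_end = (math.floor(now / window_seconds) + 1) * window_seconds
    headers = {
        "X-Ratelimit-Limit": str(limit),                      # calls allowed per window
        "X-Ratelimit-Remaining": str(max(limit - count, 0)),  # calls left in this window
    }
    if count <= limit:
        # Allowed: 200 stands for "forward"; the API response carries these headers.
        return 200, headers
    # Throttled: tell the client how long until the current window closes.
    headers["X-Ratelimit-Retry-After"] = str(math.ceil(window_end - now))
    return 429, headers
```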

Lyft Open Source Rate-Limiting Component

When a user sends too many requests, a 429 Too Many Requests error and the X-Ratelimit-Retry-After header are returned to the client.

Detailed System Design for Rate Limiter

- As discussed previously, rules are stored on disk. Workers frequently pull the rules from disk and store them in the cache.

- When a client sends a request to the server, the request first has to pass the guard at the gate: the rate limiter middleware.

- The rate limiter middleware loads the rules from the cache. Then it fetches counters and last request timestamps from the Redis cache. Based on the response, the rate limiter does one of the following:

- If the request is not rate limited, it is forwarded to the API servers.

- If the request is rate limited, the rate limiter middleware returns a 429 Too Many Requests error to the client.

- Meanwhile, depending on your setup, the rate-limited request is either dropped or forwarded to the message queue shown on the chart.
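The drop-or-queue choice in the last step can be sketched as a small policy object. `OverflowHandler`, its `mode` values, and the bounded in-process `deque` are assumptions for illustration; in the design above the queue would be a separate message queue service, not an in-memory list.

```python
from collections import deque
from typing import Deque, List


class OverflowHandler:
    """Hypothetical policy for requests that hit the limit: drop them, or park
    them in a bounded queue for a worker to process later."""

    def __init__(self, mode: str, max_queue: int = 100) -> None:
        if mode not in ("drop", "queue"):
            raise ValueError("mode must be 'drop' or 'queue'")
        self.mode = mode
        self.max_queue = max_queue
        self.queue: Deque[str] = deque()
        self.dropped = 0

    def on_rate_limited(self, request: str) -> int:
        if self.mode == "queue" and len(self.queue) < self.max_queue:
            self.queue.append(request)   # kept for later processing
        else:
            self.dropped += 1            # dropped (drop mode, or the queue is full)
        return 429                       # the client is told it was throttled either way

    def drain(self, n: int) -> List[str]:
        """Hand up to n queued requests to a worker, oldest first."""
        batch: List[str] = []
        while self.queue and len(batch) < n:
            batch.append(self.queue.popleft())
        return batch
```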

By now, you should have gone over this chart with a fine-toothed comb and have a general understanding of the dataflow.

Next, we will discuss some of the challenges of scaling a rate limiter beyond a single server environment.

The Challenge of Distributed Systems

Scaling a rate limiter to millions of users across multiple servers introduces two critical design hurdles:

- Race Conditions: In high-concurrency environments, two threads might read the same counter value before updating it.

- Initial State: Redis holds a counter value of 3.

- Concurrent Reads: Thread A and Thread B (on different servers) both read the Redis key at the same millisecond; both receive 3.

- Local Validation: Both threads independently evaluate the check 3 < 5. Since this is true, both determine the request is allowed.

- Redundant Increments: Thread A sends a command to update the counter to 4. Almost simultaneously, Thread B sends the same command to update the counter to 4.

- Rate Limit Breach: Redis reflects a final value of 4, but 5 requests have actually been processed (3 earlier plus the 2 new ones). Because the counter undercounts, the next request also passes the 4 < 5 check, letting the client exceed the intended limit.

- Synchronization: Since web tiers are stateless, a client might hit different rate limiter servers for each request.

Race Condition Example
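The interleaving described above can be replayed deterministically in Python, without real threads, to see the lost update. The dict stands in for Redis and `replay_race` is a hypothetical helper; the limit of 5 matches the 3 < 5 check in the example.

```python
from typing import Dict, List


def replay_race(store: Dict[str, int], key: str, limit: int) -> List[bool]:
    """Replay the two-thread interleaving step by step (no real threads).

    Returns whether each of the two requests was allowed.
    """
    # Concurrent reads: both threads see the same value.
    seen_by_a = store[key]
    seen_by_b = store[key]
    # Local validation: each thread checks its own (soon stale) copy.
    a_allowed = seen_by_a < limit
    b_allowed = seen_by_b < limit
    # Redundant increments: each thread writes "what I read + 1".
    if a_allowed:
        store[key] = seen_by_a + 1
    if b_allowed:
        store[key] = seen_by_b + 1
    return [a_allowed, b_allowed]
```

Starting from `{"user:42": 3}`, both requests are allowed but the counter ends at 4 instead of 5, so a sixth request would still pass the check.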

Solution

If you are familiar with race conditions, locks may be the most obvious way to solve this problem. However, locks slow the system down significantly, so to avoid them we use Lua scripts or the Redis sorted set data structure. I would encourage readers to research these two alternative methods.
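As a sketch of the Lua script approach: Redis executes a Lua script atomically, so no other client's command can run between the script's read and its increment. The script text below is shown for reference only and is not executed. To keep the example runnable, `ScriptedStore` is a hypothetical in-memory stand-in where a single lock models that atomic execution inside Redis; the application servers themselves take no lock.

```python
import threading
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict

# Reference only (not executed here): check-and-increment as a Redis Lua script.
# KEYS[1] is the counter key and ARGV[1] is the limit.
CHECK_AND_INCR_LUA = """
local current = tonumber(redis.call('GET', KEYS[1]) or '0')
if current >= tonumber(ARGV[1]) then
  return 0
end
redis.call('INCR', KEYS[1])
return 1
"""


class ScriptedStore:
    """In-memory stand-in for Redis; the lock models atomic script execution."""

    def __init__(self) -> None:
        self._data: Dict[str, int] = {}
        self._lock = threading.Lock()

    def eval_script(self, script: Callable[[Dict[str, int]], bool]) -> bool:
        with self._lock:
            return script(self._data)


def check_and_increment(key: str, limit: int) -> Callable[[Dict[str, int]], bool]:
    """Python mirror of CHECK_AND_INCR_LUA: read, compare, and increment as one unit."""
    def script(data: Dict[str, int]) -> bool:
        current = data.get(key, 0)
        if current >= limit:
            return False
        data[key] = current + 1
        return True
    return script


def run_concurrent_requests(n_requests: int, limit: int) -> int:
    """Fire n_requests from a thread pool and return how many were allowed."""
    store = ScriptedStore()
    script = check_and_increment("user:42", limit)
    with ThreadPoolExecutor(max_workers=8) as pool:
        results = list(pool.map(lambda _: store.eval_script(script), range(n_requests)))
    return sum(results)
```

Whatever the thread scheduling, exactly `limit` requests get through, because the check and the increment can no longer be split apart.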

The next design hurdle is synchronization across multiple servers.

What NOT TO DO

Sticky Sessions (Left Panel): This represents a configuration where a specific client is always routed to the same rate limiter instance. While this makes tracking easy, it's often avoided in modern distributed systems because it doesn't scale well and creates a single point of failure for that user's session.

Non-Sticky Sessions (Right Panel): This illustrates a common distributed environment. Requests from Client 1 might hit Rate Limiter 1 first, and then Rate Limiter 2 for the next request. Without a shared data store (like Redis), these limiters won't know the client's total request count, leading to inconsistent enforcement.

What TO DO

Using a centralized data store like Redis ensures all servers share a single source of truth for every user's counter.
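The difference between the two panels can be shown with a small simulation. Each `RateLimiterNode` (a hypothetical class) either keeps its own dict of counters or shares one dict that stands in for Redis, and requests from one client alternate between two nodes as in the non-sticky setup. With a limit of 5, local counters let the client through 10 times out of 20, while the shared store allows exactly 5.

```python
from itertools import cycle
from typing import Dict, List


class RateLimiterNode:
    """A rate limiter instance; `store` is either private to the node or shared."""

    def __init__(self, store: Dict[str, int], limit: int) -> None:
        self.store = store
        self.limit = limit

    def allow(self, client_id: str) -> bool:
        count = self.store.get(client_id, 0)
        if count >= self.limit:
            return False
        self.store[client_id] = count + 1
        return True


def count_allowed(nodes: List[RateLimiterNode], client_id: str, n_requests: int) -> int:
    # Non-sticky routing: requests alternate between nodes (round robin).
    router = cycle(nodes)
    return sum(next(router).allow(client_id) for _ in range(n_requests))
```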

Performance & Monitoring

Performance Optimization

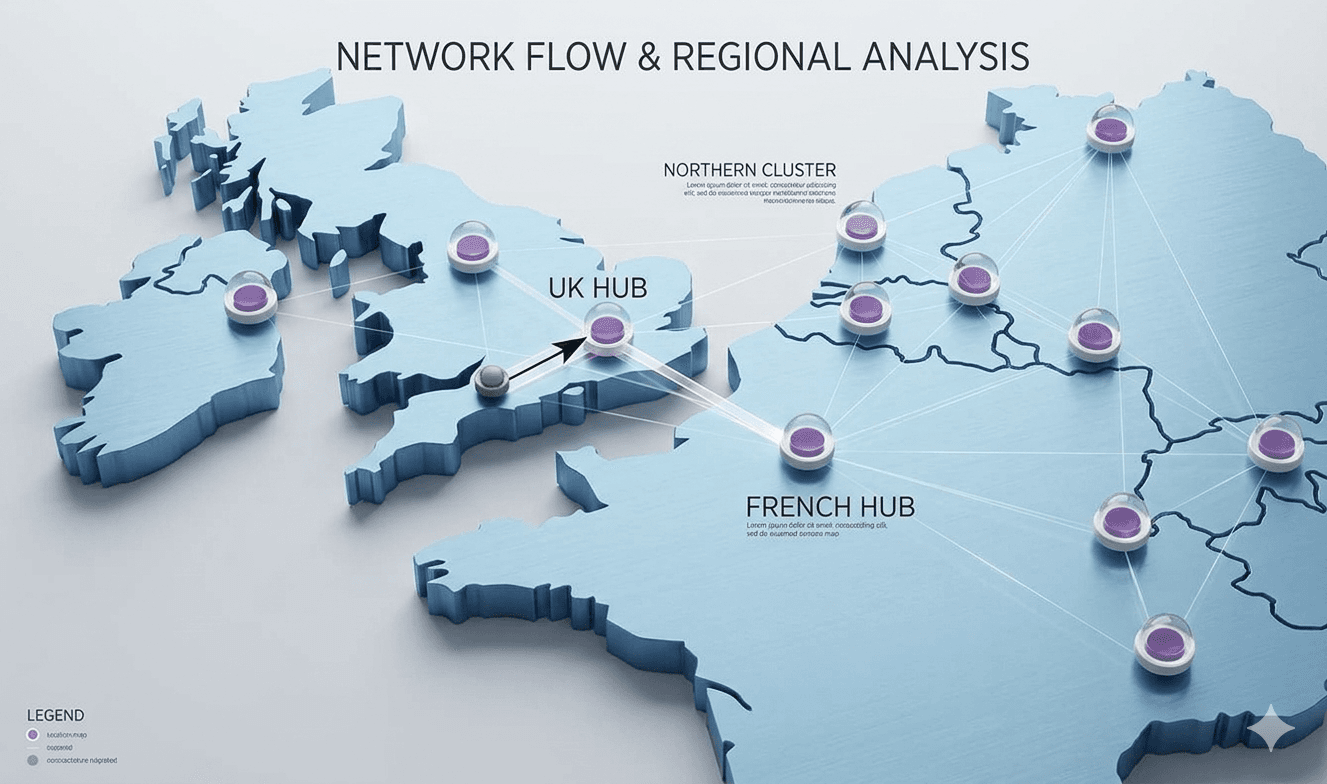

To optimize performance, we deploy edge servers globally so traffic is routed to the closest location, reducing latency. Additionally, we use an eventual consistency model to synchronize data across data centers.

A multi-data-center setup is crucial for rate limiting because latency becomes a problem for users located far from the data center. Many cloud services have built edge server locations around the world.

Monitoring

Once the rate limiter is set up, monitoring is vital. If the rules are too strict, valid requests are dropped; if they are too lax, the system can crash during traffic bursts. In scenarios like flash sales, we might even swap to an algorithm such as Token Bucket to better support bursty traffic. The main goal is to determine whether the rate limiting algorithm and the rate limiting rules are effective.
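For reference, here is a minimal token bucket sketch showing why it suits flash-sale bursts: a full bucket admits up to `capacity` requests at once, then refills at `refill_rate` tokens per second. The injectable `clock` parameter is my own addition so the behavior can be tested without sleeping; in a distributed deployment the token count and last refill time would live in Redis rather than in process memory.

```python
import time
from typing import Callable


class TokenBucket:
    """capacity = maximum burst size, refill_rate = tokens added per second."""

    def __init__(self, capacity: int, refill_rate: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.capacity = capacity
        self.refill_rate = refill_rate
        self._clock = clock
        self._tokens = float(capacity)   # start full so an initial burst is allowed
        self._last = clock()

    def allow(self) -> bool:
        now = self._clock()
        # Refill according to elapsed time, never beyond capacity.
        self._tokens = min(self.capacity, self._tokens + (now - self._last) * self.refill_rate)
        self._last = now
        if self._tokens >= 1:
            self._tokens -= 1            # each request consumes one token
            return True
        return False
```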

Conclusion

Designing a production-grade rate limiter requires balancing speed, accuracy, and scalability. Throughout these blogs, we’ve explored various algorithms: Token Bucket, Leaking Bucket, Fixed Window Counter, Sliding Window Log, and Sliding Window Counter, each offering unique trade-offs in complexity and precision.

We did a deep dive into designing a rate limiter today. We discussed the system architecture, rate limiting in a distributed environment with multiple servers, performance optimization, and monitoring.

If time allows, there are additional talking points to be aware of when discussing a rate limiter:

- Hard vs soft rate limiting

- Hard is when the number of requests cannot exceed the threshold.

- Soft is when the requests can exceed the threshold for a certain period.

- Rate limiting is not restricted to one part of the stack; it can be applied at various layers of the OSI model.

- Application Layer (Layer 7): This focuses on HTTP requests and is the primary method discussed in this article.

- Network Layer (Layer 3): Rate limiting can be enforced by IP address using tools like iptables.

- The OSI Framework: The OSI model defines a 7-layer hierarchy:

- Layer 7: Application (e.g., HTTP)

- Layer 6: Presentation

- Layer 5: Session

- Layer 4: Transport

- Layer 3: Network (e.g., IP)

- Layer 2: Data link

- Layer 1: Physical

- Design your client with best practices to avoid being rate limited.

- Implement caching to avoid frequent API calls.

- Understand the limits set by your rules, and don't send too many requests in a short time frame.

- Catch exceptions and errors so your client can recover gracefully and keep operating.

- Add sufficient back-off time to your retry logic.
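The retry advice above can be sketched as a small client helper. It's a hypothetical function under these assumptions: `send` returns a `(status, headers)` pair, the server uses the `X-Ratelimit-Retry-After` header described earlier, and when that header is missing the client falls back to exponential back-off with random jitter (my choice, not something prescribed above). `sleep` is passed in so real code can use `time.sleep` and tests can record the delays instead.

```python
import random
from typing import Callable, Dict, Tuple

Response = Tuple[int, Dict[str, str]]


def call_with_backoff(send: Callable[[], Response], sleep: Callable[[float], None],
                      max_retries: int = 5, base_delay: float = 0.5,
                      max_delay: float = 30.0) -> Response:
    """Retry on HTTP 429, preferring the server's retry hint over our own back-off."""
    for attempt in range(max_retries + 1):
        status, headers = send()
        if status != 429 or attempt == max_retries:
            return status, headers        # success, other error, or out of retries
        retry_after = headers.get("X-Ratelimit-Retry-After")
        if retry_after is not None:
            delay = float(retry_after)    # the server told us how long to wait
        else:
            # Exponential back-off with full jitter: 0..base*2^attempt, capped.
            delay = random.uniform(0, min(max_delay, base_delay * 2 ** attempt))
        sleep(delay)
    raise RuntimeError("unreachable")
```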

Congratulations! You have now completed the rate limiting deep dive. To maximize your learning, take a break for a few days and then revisit this material.

Summary

Thank you for reading my deep dive on rate limiter design!

To continue learning the fundamentals of System Design, make sure to check out the additional blogs here for more deep dives into scalable architecture.

Credit: ByteByteGo - Design a Rate Limiter